Improving the Account Manager User Experience

Project Context

The internal eManager platform, integral to the Account Services team’s daily operations, was experiencing significant usability and performance challenges. These issues were negatively impacting team productivity, increasing user frustration, and posing risks to business efficiency and customer satisfaction. The platform’s core functionalities were intact but marred by workflow inefficiencies, scattered information architecture, unreliable reporting, and critical feature gaps.

The primary goal of this research was to identify key pain points and opportunities for improvement within the eManager system, focusing on enhancing user experience and operational efficiency for the Account Services team. By understanding the lived experiences of frontline users, the project aimed to inform a user-centric redesign and prioritize technical improvements that would empower the team and improve overall customer service.

My Role

As the UX Researcher on this project, I was responsible for designing and executing the research plan, conducting in-depth user interviews, analyzing qualitative data, and synthesizing insights into actionable recommendations. I collaborated closely with the Account Services team to gather authentic user feedback and worked alongside Product Management and Engineering teams to ensure findings were aligned with technical feasibility and business priorities. Additionally, I facilitated workshops to validate findings and helped integrate research outcomes into the product roadmap.

Research Methodology

Given the exploratory nature of the project and the need for a deep understanding of complex workflows, I chose qualitative user interviews as the primary research method. This approach allowed for detailed exploration of user experiences, uncovering nuanced pain points and contextual factors that quantitative methods might miss.

To complement interviews, I also reviewed system usage logs and existing support tickets to triangulate findings and validate user-reported issues. This mixed-methods approach ensured a comprehensive understanding of both user perceptions and system performance.

Research Process

Recruitment and Panel Management

I collaborated with HR and team leads to identify and recruit six Account Services team members representing a range of roles and experience levels. In line with company policy, no incentive was offered; however, all participants were adamant that the best incentive would be a better system as a result of the study.

Panel records were securely maintained, and all participants provided informed consent, with clear communication about data usage and confidentiality. Data security protocols were strictly followed, including encrypted storage and anonymization of sensitive information.

Data Collection

I conducted one-on-one, semi-structured interviews lasting approximately 60 minutes each. Interviews were recorded (with consent) and transcribed for detailed analysis. The interview guide focused on daily workflows, pain points, feature usage, and suggestions for improvement.

Data Analysis

Using thematic analysis, I coded transcripts to identify recurring themes and prioritized issues based on severity and frequency. I also mapped workflows to visualize inefficiencies and information fragmentation.

Regular check-ins with stakeholders ensured alignment and allowed iterative refinement of insights. I maintained ethical guardrails by continuously monitoring for bias and ensuring participant voices were authentically represented.

Key Findings

The research surfaced several critical insights:

Performance Issues: The system exhibited significant lag and instability, severely hindering productivity.

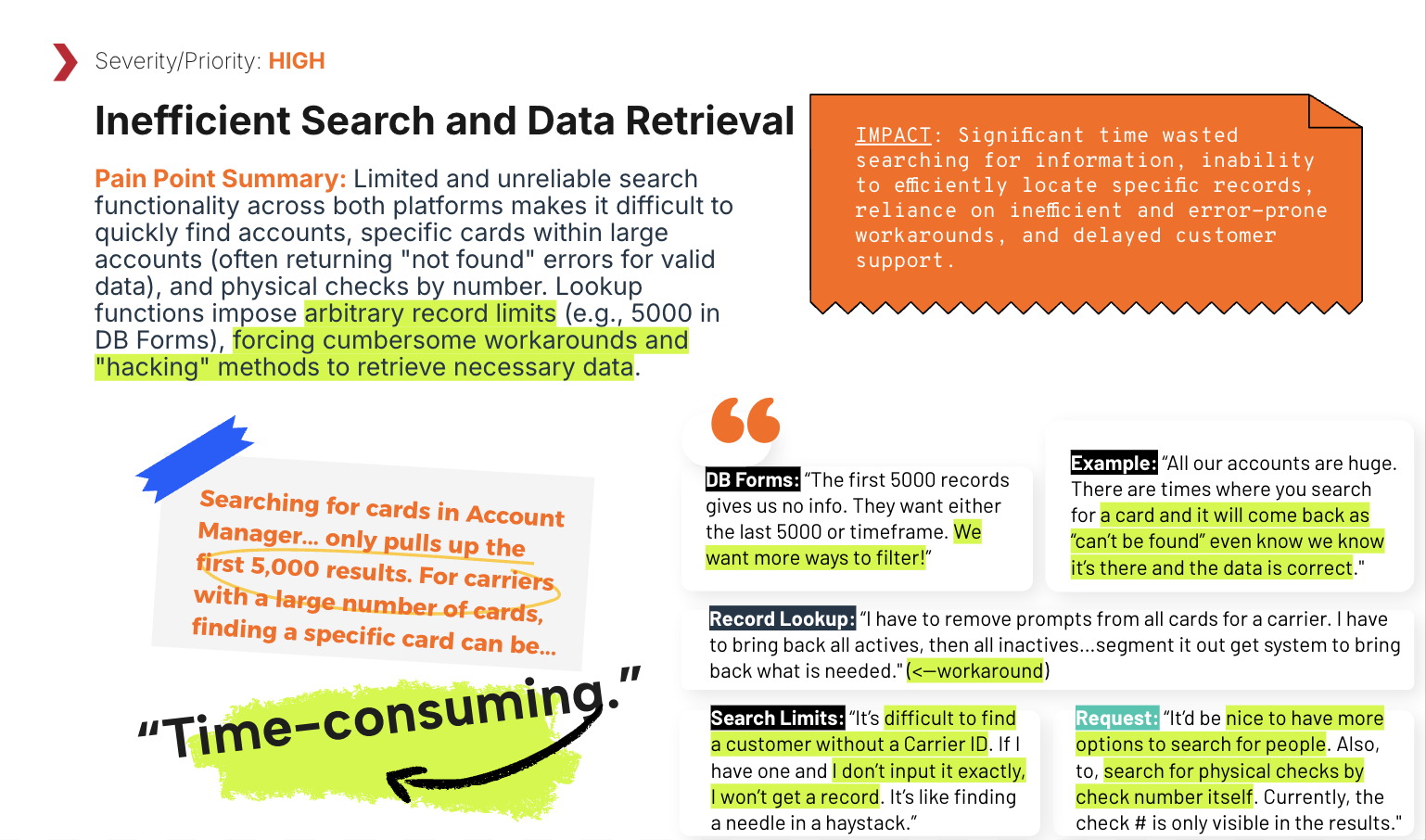

Information Disorganization: Critical account setup information was fragmented across multiple tabs, causing inefficient workflows and excessive manual data handling.

Reporting Reliability: Reports frequently failed to generate completely or contained inaccurate data, undermining trust and usability.

Lack of In-System Help: Absence of tooltips or contextual guidance increased user frustration and reliance on external resources.

Critical Feature Gaps: Areas such as fraud management, authentication, and emergency network adjustments were identified as high-priority pain points requiring immediate attention.

Opportunities for Self-Service: Introducing self-service options for common tasks (e.g., card un-suspension, PIN resets) could reduce workload and empower customers.

Impact

The research directly influenced the product roadmap and design decisions:

Prioritization of Critical Fixes: System performance and reporting reliability were flagged as “Critical Priority” areas, prompting immediate technical deep dives and resource allocation.

Workflow Redesign: Recommendations to consolidate related account setup information and enhance search capabilities were adopted to streamline user tasks.

Self-Service Features: Self-service options were proposed for development to empower customers and reduce support load.

Emergency Feature Development: Initiated planning for a mass network adjustment tool to improve business continuity during crises.

Stakeholder Alignment: Facilitated cross-team workshops to ensure shared understanding and commitment to user-centered improvements.

Post-implementation metrics showed a measurable reduction in support calls related to account setup and report issues, increased task completion rates, and improved internal Net Promoter Scores from the Account Services team.

Deliverables

Interview Guides and Research Templates: Standardized materials for consistent data collection and future reuse.

Thematic Analysis Report with Prioritization Matrix: Detailed documentation of pain points, user quotes, and thematic maps, with a framework to categorize issues by severity and business impact.

User Journey Maps with Pain Point Summaries: Visualizations of current workflows highlighting inefficiencies and areas for improvement, with categorized issues and severity ratings.

Presentation Decks: Materials used for stakeholder workshops and roadmap integration discussions.

Reflections

This project reinforced the value of engaging frontline users to uncover authentic challenges and opportunities often invisible to product teams. The qualitative approach provided rich insights that quantitative metrics alone could not reveal. In future projects, I would incorporate usability testing alongside interviews to observe user interactions more directly and validate proposed design solutions iteratively. Additionally, expanding research to include customer perspectives, as suggested by carrier collaboration opportunities, could further enrich understanding and drive holistic improvements.

I also encountered several challenges during the study that are worth noting, as they required me to adapt my methods and maintain strong stakeholder engagement:

Critical account setup information was scattered across multiple tabs and sections within the eManager system, making it difficult to fully understand and map user workflows.

Frequent slowness, timeouts, and unreliable reporting complicated efforts to observe consistent user interactions and gather reliable data.

The absence of tooltips or help screens meant users often relied on external knowledge or guesswork, which made it challenging to distinguish between system limitations and user misunderstandings.

Translating qualitative user insights into actionable recommendations that aligned with technical feasibility and business priorities was, at times, genuinely difficult.