* I kept two challenges in particular in mind throughout.

** from https://conjointly.com/blog/willingness-to-pay/

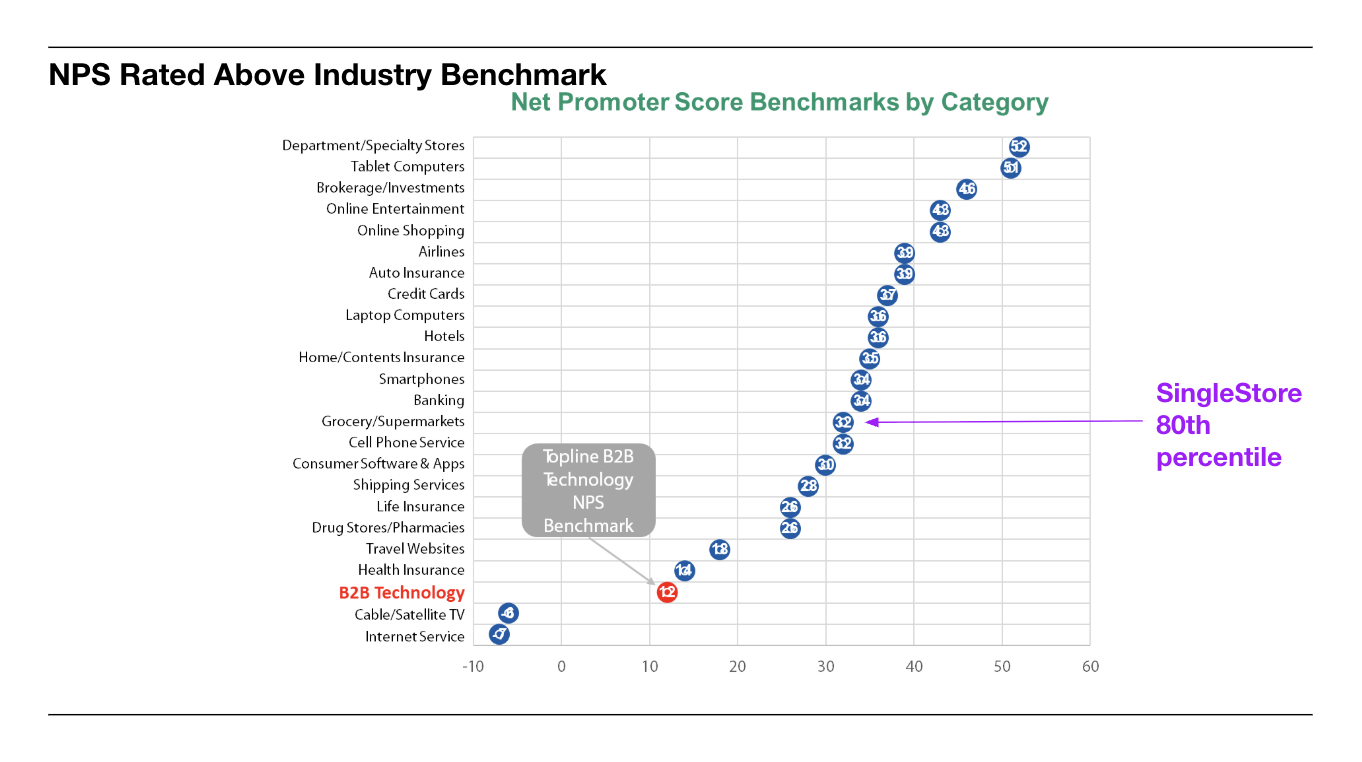

** see https://toplinestrategy.com/how-good-or-bad-is-my-net-promoter-score/

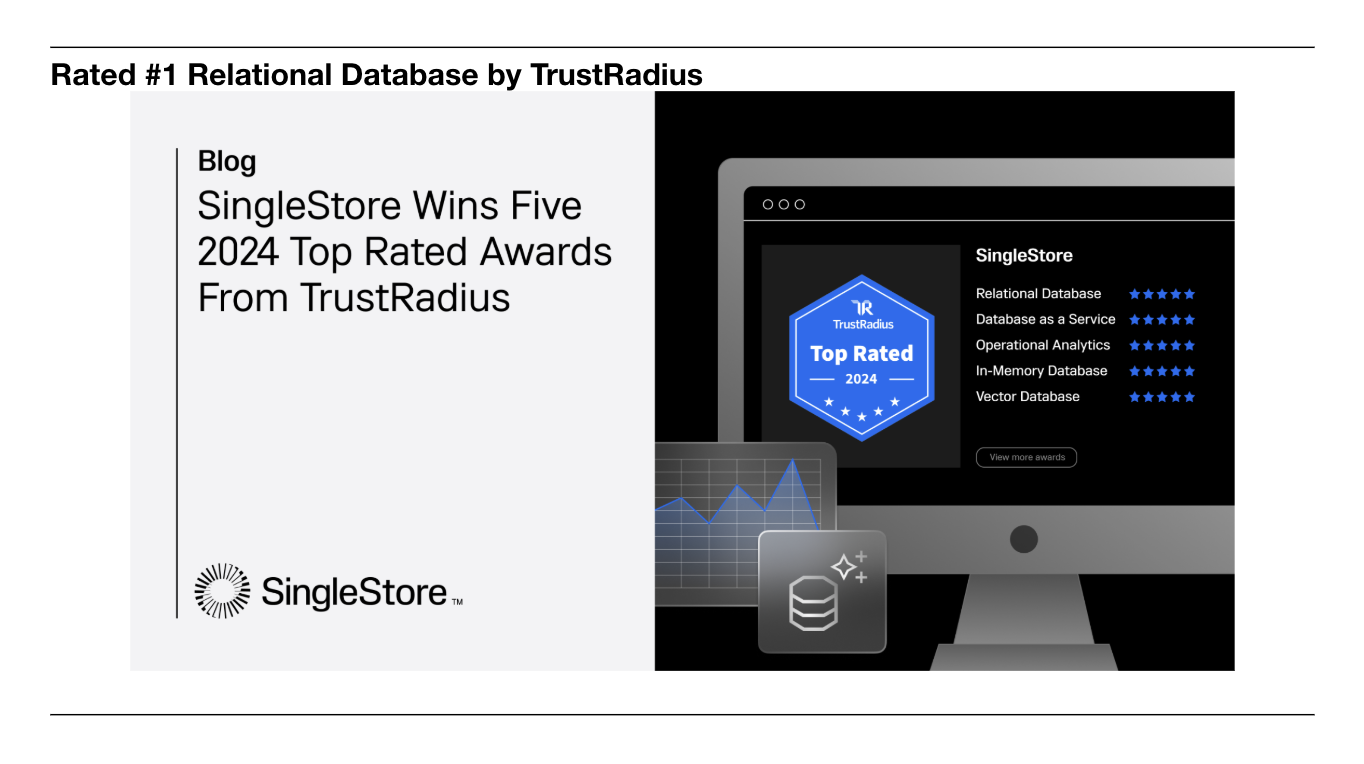

** see https://www.singlestore.com/blog/singlestore-wins-five-2024-top-rated-awards-from-trustradius/